Background

Auto-GPT is capable of formulating plans, parsing plans, breaking down plans, executing plans, evaluating execution results, and continuously iterating to produce outcomes while integrating external resources to perform corresponding actions, progressively achieving the goal based on the user’s input objective.

I am very interested in the fact that Auto-GPT’s prompt words update automatically.

Auto-GPT Original Explanation

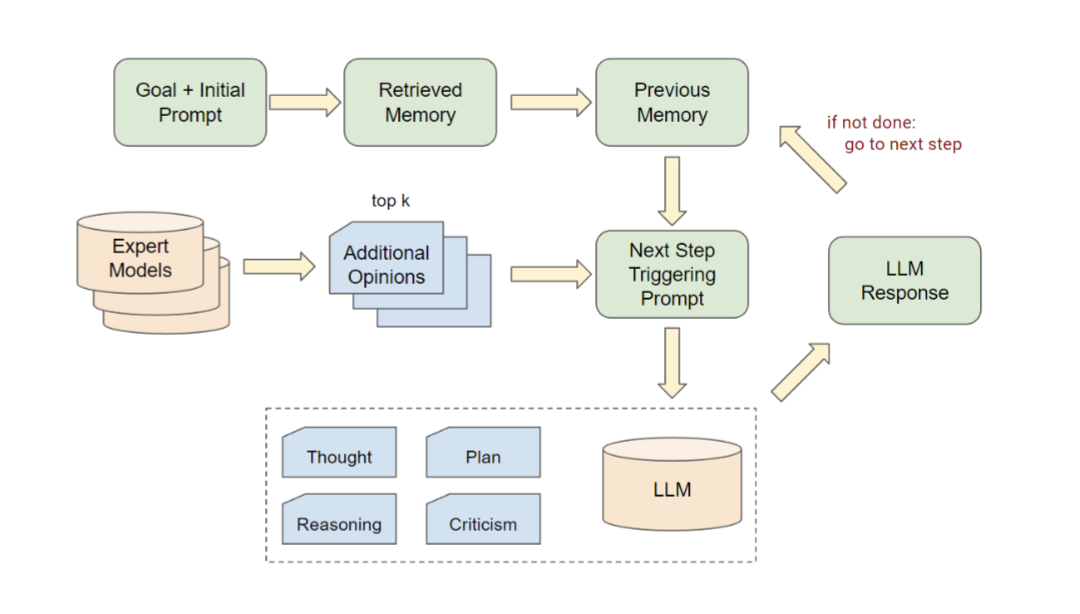

User's goal input + initial system prompt =>

Retrieve Memory database =>

Get previous Memories =>

Formulate plan => Generate (first round/next round) prompt =>

Invoke LLM (ReAct framework) to execute plan =>

Update current state (used to generate next round prompt) =>

Invoke tools or components to execute plan =>

Based on current results, decide whether to continue to “formulate plan” to execute next step or directly Done

Auto-GPT Evaluation

Due to the instability of LLM task decomposition, the prompt words explode in growth during each planning loop. The generated plans also exhibit uncontrollable changes. The Auto-GPT framework is rarely used in commercial agents.

Stable Autonomous Agent Architecture

Goal

↓

Planner

↓

Task Queue

↓

Executor

↓

Evaluator

↓

Memory

↓

Loop

Autonomous Agent Evaluation

Issue: Explanation:

Cost High number of LLM calls

Stability Unstable task planning

Controllability Difficult to debug

Safety Potential to perform incorrect operations

References

“Auto-GPT for Online Decision Making: Benchmarks and Additional Opinions”

https://www.semanticscholar.org/reader/3b8871e4c25d3aaca2bee6606c07bc870337253c